Unbalanced Two Way ANOVA Calculator

No repeated measures - a repeated measure occurs when the same subjects are measured under multiple conditions or at multiple time points.

Two factor ANOVA with replication - enter all the replications in one cell separated by Enter or , (comma).

ANOVA without replication - enter one value per cell.

The tool ignores empty cells or non-numeric cells.


Models

There are many possible models. This calculator currently deals only with the following balanced models:

- Fixed effect model (A-Fixed, B-Fixed), no repeats - both factors are fixed.

- Mixed effect model (A-Random, B-Fixed), no repeats - factor A is random, factor B is fixed, each subject is measured only once.

- Mixed effect model (A-Fixed, B-Random), no repeats - factor A is fixed, factor B is random, each subject is measured only once.

- Random effect model (A-Random, B-Random), no repeats - both factors are random.

Design

Balanced design

The balanced design has the same number of observations in each cell, where a cell is one combination of the factors' categories.

Since there is no overlap between the factors, or between the factors and the interaction, the sums of squares add up: SST = SSA + SSB + SSAB + SSE.


Unbalanced design

When the design is unbalanced, the effects become correlated. When the distribution of data between the cells matches the population distribution, correlation exists only between each factor and the interaction. However, when the distribution of data between the cells does not match the population distribution, correlation occurs both between each factor and the interaction, and among the factors themselves.

When correlation exists, the sums of squares (SS) overlap. If we calculate each SS as we do for the balanced model, the shared part is counted more than once, so the SS add up to more than the actual total. The question is then how to allocate the shared SS between the two factors, and between the factors and the interaction. There are several methods for dealing with the shared sum of squares.
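The overlap can be seen numerically. The following is a minimal sketch, not the calculator's implementation: the function name `balanced_formula_ss` and both data sets are hypothetical. It applies the balanced-design formulas to a balanced and to an unbalanced 2x2 layout and compares the sum of the parts with SST.

```python
def balanced_formula_ss(cells):
    """Apply the balanced-design formulas to a dict {(i, j): [observations]}."""
    def mean(xs):
        return sum(xs) / len(xs)

    values = [y for obs in cells.values() for y in obs]
    grand = mean(values)
    rows = sorted({i for i, _ in cells})
    cols = sorted({j for _, j in cells})
    # Pool the observations of every row (factor A) and column (factor B).
    row_obs = {i: [y for (r, _), obs in cells.items() if r == i for y in obs] for i in rows}
    col_obs = {j: [y for (_, c), obs in cells.items() if c == j for y in obs] for j in cols}

    ss_a = sum(len(row_obs[i]) * (mean(row_obs[i]) - grand) ** 2 for i in rows)
    ss_b = sum(len(col_obs[j]) * (mean(col_obs[j]) - grand) ** 2 for j in cols)
    ss_ab = sum(
        len(obs) * (mean(obs) - mean(row_obs[i]) - mean(col_obs[j]) + grand) ** 2
        for (i, j), obs in cells.items()
    )
    ss_e = sum((y - mean(obs)) ** 2 for obs in cells.values() for y in obs)
    ss_t = sum((y - grand) ** 2 for y in values)
    return {"SSA": ss_a, "SSB": ss_b, "SSAB": ss_ab, "SSE": ss_e, "SST": ss_t}


# Hypothetical data: the same cell means pattern, with and without equal cell sizes.
balanced = {(0, 0): [4, 6], (0, 1): [7, 9], (1, 0): [6, 8], (1, 1): [12, 14]}
unbalanced = {(0, 0): [4, 5, 6], (0, 1): [7, 9], (1, 0): [6, 8], (1, 1): [12, 13, 14, 15]}

for name, data in (("balanced", balanced), ("unbalanced", unbalanced)):
    ss = balanced_formula_ss(data)
    parts = ss["SSA"] + ss["SSB"] + ss["SSAB"] + ss["SSE"]
    print(f"{name}: sum of parts = {parts:.3f}, SST = {ss['SST']:.3f}")
```

For the balanced data the parts add up to SST; for the unbalanced data they exceed it, which is the shared sum of squares being counted more than once.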

Unbalanced design - Type I (Sequential Sum of Squares):

Type I - sequential: the first sum of squares (SS) you calculate gets the shared sum of squares, so the order of the factors matters. The sum of squares formulas follow:

Factor A: SSA.

Factor B: SSB|A = SSR(y = β0 + β1A + β2B) - SSA.

Interaction AB: SSAB|A,B = SSR(y = β0 + β1A + β2B + β3AB) - SSR(y = β0 + β1A + β2B).
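The sequential formulas can be sketched with ordinary least squares. This is an illustration under assumptions, not the calculator's code: the data are hypothetical, the helpers `ssr` and `type1` are made-up names, and the factors are coded as 0/1 dummy columns, which is enough for a 2x2 layout.

```python
import numpy as np

# Hypothetical unbalanced 2x2 data, one entry per observation
# (cell sizes 3, 2, 2 and 4).
a = np.array([0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1], dtype=float)  # level of factor A
b = np.array([0, 0, 0, 1, 1, 0, 0, 1, 1, 1, 1], dtype=float)  # level of factor B
y = np.array([4, 5, 6, 7, 9, 6, 8, 12, 13, 14, 15], dtype=float)


def ssr(*columns):
    """Regression sum of squares of y on an intercept plus the given columns."""
    X = np.column_stack([np.ones_like(y), *columns])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return float(np.sum((X @ beta - y.mean()) ** 2))


def type1(first, second):
    """Sequential SS: `first` enters the model before `second`."""
    ss_first = ssr(first)
    ss_second = ssr(first, second) - ss_first
    ss_inter = ssr(first, second, first * second) - ssr(first, second)
    return ss_first, ss_second, ss_inter


sst = float(np.sum((y - y.mean()) ** 2))
sse = sst - ssr(a, b, a * b)

a_first = type1(a, b)  # (SSA, SSB|A, SSAB|A,B)
b_first = type1(b, a)  # (SSB, SSA|B, SSAB|A,B)
print("A first:", [round(v, 3) for v in a_first])
print("B first:", [round(v, 3) for v in b_first])
```

In both orders the three terms plus SSE add up to SST, but the factor that enters first receives the shared sum of squares, so the value attributed to factor A changes with the order.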

Unbalanced design - Type II (Partial Sum of Squares):

Type II - conservative: it assumes there is no interaction between the factors, and it ignores the shared SS between the factors. The sum of squares formulas follow:

Factor A: SSA|B = SSR(y = β0 + β1A + β2B) - SSB.

Factor B: SSB|A = SSR(y = β0 + β1A + β2B) - SSA.

In this case we assume no interaction, but we also test it using the following:

Interaction AB: SSAB|A,B = SSR(y = β0 + β1A + β2B + β3AB) - SSR(y = β0 + β1A + β2B).

Unbalanced design - Type III (Marginal Sum of Squares)

Type III - assumes there is an interaction between the factors. It ignores all the shared SS, both between the factors and between the factors and the interaction. The sum of squares formulas follow:

Factor A: SSA|B, AB = SSR(y = β0 + β1A + β2B + β3AB) - SSR(y = β0 + β1B + β2AB).

Factor B: SSB|A, AB = SSR(y = β0 + β1A + β2B + β3AB) - SSR(y = β0 + β1A + β2AB).

The interaction is tested using the following:

Interaction AB: SSAB|A,B = SSR(y = β0 + β1A + β2B + β3AB) - SSR(y = β0 + β1A + β2B).

If the interaction does not exist in the population, the Type II method is a more powerful test than the Type III method.
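The Type II and Type III formulas can be compared on the same data with a short least-squares sketch. The data and the helper `ssr` are hypothetical, and the sketch assumes effect (sum-to-zero) coding, because the Type III models that keep the interaction but drop a main effect depend on how the factors are coded.

```python
import numpy as np

# Hypothetical unbalanced 2x2 data, one entry per observation.
a = np.array([0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1], dtype=float)  # level of factor A
b = np.array([0, 0, 0, 1, 1, 0, 0, 1, 1, 1, 1], dtype=float)  # level of factor B
y = np.array([4, 5, 6, 7, 9, 6, 8, 12, 13, 14, 15], dtype=float)

# Effect coding (an assumption of this sketch): level 0 -> +1, level 1 -> -1.
xa = 1.0 - 2.0 * a
xb = 1.0 - 2.0 * b
xab = xa * xb


def ssr(*columns):
    """Regression sum of squares of y on an intercept plus the given columns."""
    X = np.column_stack([np.ones_like(y), *columns])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return float(np.sum((X @ beta - y.mean()) ** 2))


full = ssr(xa, xb, xab)      # y = b0 + b1*A + b2*B + b3*AB
additive = ssr(xa, xb)       # y = b0 + b1*A + b2*B

type2 = {
    "A": additive - ssr(xb),   # SSA|B
    "B": additive - ssr(xa),   # SSB|A
    "AB": full - additive,     # SSAB|A,B
}
type3 = {
    "A": full - ssr(xb, xab),  # SSA|B,AB
    "B": full - ssr(xa, xab),  # SSB|A,AB
    "AB": full - additive,     # SSAB|A,B
}
for name in ("A", "B", "AB"):
    print(f"{name}: Type II = {type2[name]:.3f}, Type III = {type3[name]:.3f}")
```

The interaction term is identical in both methods; only the main effects differ, since Type III also removes the part each factor shares with the interaction.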

Glossary

SST is the sum of squared differences between the dependent variable and the grand mean.

SSModel = SSA + SSB + SSAB + SSE.

For the balanced model and for Type I, SSModel = SST.

Targets

The two way ANOVA test checks the following targets using sample data:

- Checks if the difference between Factor A averages of two or more categories is significant

- Checks if the difference between Factor B averages of two or more categories is significant

- Checks if there is an interaction between Factor A and Factor B

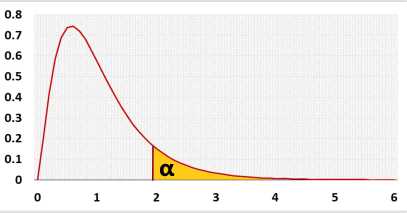

The F statistic is the ratio of the variance between the groups to the variance within the groups. The smaller the F statistic, the more consistent the data are with equal averages; a large F statistic is evidence that the averages differ.

Two-way ANOVA

H0: Interaction(AiBj) = 0 (∀ i = 1 to a, j = 1 to b)

There is no interaction between variable A and variable B, i.e., for all the cells, the effect of variable A on the cells' means does not depend on the effect of variable B, and vice versa.

| F statistic | Fixed Model | Mixed Model | Random Model | Mixed Repeated |
|---|---|---|---|---|
| FA | MSA / MSE | MSA / MSAB | MSA / MSAB | MSA / MSSWA |
| FB | MSB / MSE | MSB / MSE | MSB / MSAB | MSB / MSBSWA |
| FAB | MSAB / MSE | MSAB / MSE | MSAB / MSE | MSAB / MSBSWA |
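The choice of denominator per model can be sketched as a small lookup. The function name `f_statistics` and the mean squares in the example are hypothetical, and the mixed repeated column is left out because it needs the extra mean squares MSSWA and MSBSWA.

```python
def f_statistics(ms_a, ms_b, ms_ab, ms_e, model="fixed"):
    """Return (FA, FB, FAB) using the denominators listed in the table above."""
    denominators = {
        "fixed": (ms_e, ms_e, ms_e),
        "mixed": (ms_ab, ms_e, ms_e),
        "random": (ms_ab, ms_ab, ms_e),
    }
    den_a, den_b, den_ab = denominators[model]
    return ms_a / den_a, ms_b / den_b, ms_ab / den_ab


# Hypothetical mean squares, chosen only to make the ratios easy to read.
for model in ("fixed", "mixed", "random"):
    print(model, f_statistics(24.0, 40.0, 4.0, 2.0, model))
```

With the same mean squares, the F statistic of a factor gets smaller when its denominator changes from MSE to MSAB, provided MSAB is larger than MSE.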

Assumptions

- The dependent variable is continuous (ratio or interval)

- Two categorical independent variables

- Independent observations (no repeated measure)

- The residuals distribution is normal

- Homogeneity of variances, a similar variance for each cell

Required Sample Data

| Sample data from all compared groups |

Parameters

a - the number of categories in variable A, number of rows.

b - the number of categories in variable B, number of columns.

ni - sample size of category i of variable A (row i).

nj - sample size of category j of variable B (column j).

ni,j - sample size of cell i,j (row i, column j). In the balanced design, ni,j = n/(a*b).

n - overall sample size, includes all the groups (Σni,j, i=1 to a, j=1 to b).

Ȳi - average of all the observations of category i of variable A (row i).

Ȳj - average of all the observations of category j of variable B (column j).

Ȳ - overall average (ΣYi,j,k / n, i=1 to a, j=1 to b, k=1 to ni,j).

Repeated measures ANOVA

s - represents the order of the subject in category i (subject 1 in category 1 is different from subject 1 in category 2).

sub - number of subjects per cell; a cell is one combination of variable A and variable B. For the balanced design: N=a*b*sub.

Ȳi,s - subject's average: the average of all the observations Yi,j,s of subject s of category i.

Ȳ - overall average (ΣYi,j,s / n).

Results calculations

Sum of squares

The sum of squares accumulates the squared differences related to the effect we try to estimate.

SSA - the squared differences related to the effect of variable A. You compare the average of every category to the total average. This is the same value as the sum of squares between groups in one way ANOVA.

SSB - the same as SSA, for variable B.

SSAB - the squared differences related to the effect of the combination of variable A and variable B in each cell. To isolate the influence of the interaction AB, that is, the interaction of a specific value of variable A with a specific value of variable B, we take the average of each cell, remove the influence of variable A and of variable B, and compare the result to the total average.

A effect = Ȳi - Ȳ

B effect = Ȳj - Ȳ

AB effect = Cell average - A effect - B effect - Total average.

= Ȳi,j - (Ȳi - Ȳ) - (Ȳj - Ȳ) - Ȳ.

= Ȳi,j - Ȳi - Ȳj + Ȳ.

Take the square of each difference:

(Ȳi,j - Ȳi - Ȳj + Ȳ)²

Every observation in a cell contributes the same squared difference, hence multiply by the sample size of each cell (ni,j):

$$SS_{AB} = \sum_{i=1}^{a}\sum_{j=1}^{b} n_{i,j}\,(\bar{Y}_{i,j} - \bar{Y}_{i} - \bar{Y}_{j} + \bar{Y})^2$$

Fixed and Random Effects

The fixed and random effects are related to the independent variables.

Fixed Effect

The effect is constant across individuals.

- The categories of the variable contain the entire list of categories

- The effect of this variable is interesting. The difference between the categories is important

- There is no known pattern in the difference between the categories

Random Effect

The effect varies across individuals; the individuals may be people or products.

- The list of categories is only a sample from the entire list of categories

- The effect of this variable is not interesting by itself. The difference between the categories is not important.

- There is no known pattern in the difference between the categories

A sample from the entire groups' population.

There is no pattern in the difference between the schools; if there were a pattern, it would be another factor, like the school's size.

Each school is not important by itself.

ANOVA table - With interaction - Type II

| Source | Degrees of Freedom (DF) | Sum of Squares (SS) | Mean Square (MS) | F statistic | p-value |
|---|---|---|---|---|---|
| Factor A (rows): between the categories of factor A | DFA = a - 1 | SSA = SS(A\|B) = SS(A, B) - SS(B) | MSA = SSA / DFA | FA = MSA / MSE | P(x > FA) |
| Factor B (columns): between the categories of factor B | DFB = b - 1 | SSB = SS(B\|A) = SS(A, B) - SS(A) | MSB = SSB / DFB | FB = MSB / MSE | P(x > FB) |
| Interaction AB: between the cells after reducing factor A and factor B effects | DFAB = (a - 1)(b - 1) | SSAB = SS(AB\|A, B) = SS(A, B, AB) - SS(A, B) | MSAB = SSAB / DFAB | FAB = MSAB / MSE | P(x > FAB) |
| Error: within the cells | DFE = n - a*b | $SSE = \sum_{i=1}^{a}\sum_{j=1}^{b}\sum_{k=1}^{n_{i,j}} (Y_{i,j,k} - \bar{Y}_{i,j})^2$ | MSE = SSE / DFE | | |
| Total: all the deviations from the average | DFT = n - 1 | $SST = \sum_{i=1}^{a}\sum_{j=1}^{b}\sum_{k=1}^{n_{i,j}} (Y_{i,j,k} - \bar{Y})^2$, SST = Sample Variance*(n-1) | MST = S² = SST / (n - 1) | | |
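The DF, MS and F columns of the table follow mechanically from the sums of squares. The sketch below assumes the Type II sums of squares are already known; the function name `anova_table` and the input values are hypothetical.

```python
def anova_table(ss_a, ss_b, ss_ab, ss_e, a, b, n):
    """Build the DF, SS, MS and F columns for a fixed model with interaction."""
    df = {"A": a - 1, "B": b - 1, "AB": (a - 1) * (b - 1), "Error": n - a * b}
    ss = {"A": ss_a, "B": ss_b, "AB": ss_ab, "Error": ss_e}
    ms = {source: ss[source] / df[source] for source in df}
    table = {}
    for source in df:
        # Every effect is tested against the error mean square (MSE).
        f_value = ms[source] / ms["Error"] if source != "Error" else None
        table[source] = {"DF": df[source], "SS": ss[source], "MS": ms[source], "F": f_value}
    return table


# Hypothetical Type II sums of squares for a 2x2 design with n = 11 observations.
table = anova_table(ss_a=37.4, ss_b=59.4, ss_ab=7.7, ss_e=11.0, a=2, b=2, n=11)
for source, row in table.items():
    print(source, row)
```

The p-value column is the right tail of the F distribution with the effect's DF and DFE; if SciPy is available, `scipy.stats.f.sf(F, DF, DFE)` is one way to obtain it.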

Sum of squares diagram - with interaction

The following diagram shows, for each observation Yi,j,k, the differences that are used to calculate the sums of squares.

A effect: Ȳi - Ȳ.

B effect: Ȳj - Ȳ.

Error: Yi,j,k - Ȳi,j.